Artificial intelligence is no longer limited to massive cloud data centers. Today, AI is rapidly expanding into edge computing applications — from autonomous vehicles and robotics to smart retail solutions and industrial automation. Driving this transformation is a new generation of hardware: AI computing motherboards for edge AI.

These specialized AI motherboards are engineered to handle machine learning inference, computer vision, and real-time data analytics directly at the source, without relying on constant cloud connectivity. In this guide, we’ll break down what an AI computing motherboard is, why it’s critical for next-generation AI deployment, and how to choose the right board for your business or industry application.

An AI computing motherboard is an embedded system board designed specifically for artificial intelligence workloads. Unlike conventional motherboards, which rely mostly on CPUs, AI computing motherboards incorporate specialized AI processors such as an NPU (Neural Processing Unit), GPU, or TPU.

By leveraging these processors, AI motherboards deliver high-performance edge AI capabilities that accelerate tasks like image recognition, predictive analytics, speech and natural language processing (NLP), and real-time decision-making — all without depending on continuous cloud connectivity.

Running AI models locally — especially in real-time applications — demands more than raw CPU power. It requires:

Standard PC motherboards aren’t optimized for these needs. AI computing motherboards close this performance gap, making them vital for robotics, smart surveillance, digital signage, and healthcare AI solutions.

According to MarketsandMarkets (2023), AI inference at the edge is projected to grow at a 26% CAGR through 2028.
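To put that growth rate in perspective, compounding at 26% per year multiplies the starting value a little over three times in five years. The following is a minimal sketch of the arithmetic, assuming the projection window runs from 2023 to 2028 and is treated as five annual compounding periods (the cited figure does not state the base year explicitly). The function name is illustrative.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Return the total growth factor after compounding `cagr` for `years` periods."""
    return (1.0 + cagr) ** years


# Assumption: 2023 -> 2028 is treated as five annual compounding periods.
multiple = growth_multiple(0.26, 5)
print(f"{multiple:.2f}x")  # 3.18x the starting value
```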

1. Integrated AI Acceleration (GPU/NPU/TPU)

Modern AI boards ship with integrated AI accelerators that significantly boost inference performance compared with CPU-only execution.

| Accelerator Type | Example Vendor | Best Use Case | Key Advantage |
| --- | --- | --- | --- |
| NPU | Rockchip, Hailo | Vision processing, IoT | Ultra-low power AI inference |
| GPU | NVIDIA, AMD | AI training, 4K rendering | High-parallel workloads |
| TPU | Google Edge | ML model inference | Tensor-level optimization |
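
The comparison above can also be expressed as a small lookup that maps a workload keyword to the accelerator types the table lists for it. This is only an illustrative sketch: the `Accelerator` class and `suggest_accelerators` function are hypothetical names, and the data is copied from the table rather than taken from any benchmark.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    kind: str
    example_vendors: tuple
    best_for: tuple
    advantage: str


# Rows copied from the comparison table; nothing here is measured data.
ACCELERATORS = (
    Accelerator("NPU", ("Rockchip", "Hailo"),
                ("vision processing", "iot"), "Ultra-low power AI inference"),
    Accelerator("GPU", ("NVIDIA", "AMD"),
                ("ai training", "4k rendering"), "High-parallel workloads"),
    Accelerator("TPU", ("Google Edge",),
                ("ml model inference",), "Tensor-level optimization"),
)


def suggest_accelerators(workload: str) -> list:
    """Return accelerator kinds whose 'best use case' mentions the workload."""
    needle = workload.strip().lower()
    return [a.kind for a in ACCELERATORS
            if any(needle in use for use in a.best_for)]


print(suggest_accelerators("vision"))    # ['NPU']
print(suggest_accelerators("training"))  # ['GPU']
```

A simple substring match like this is only a starting point; a real selection would also weigh power budget, memory, and software support.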

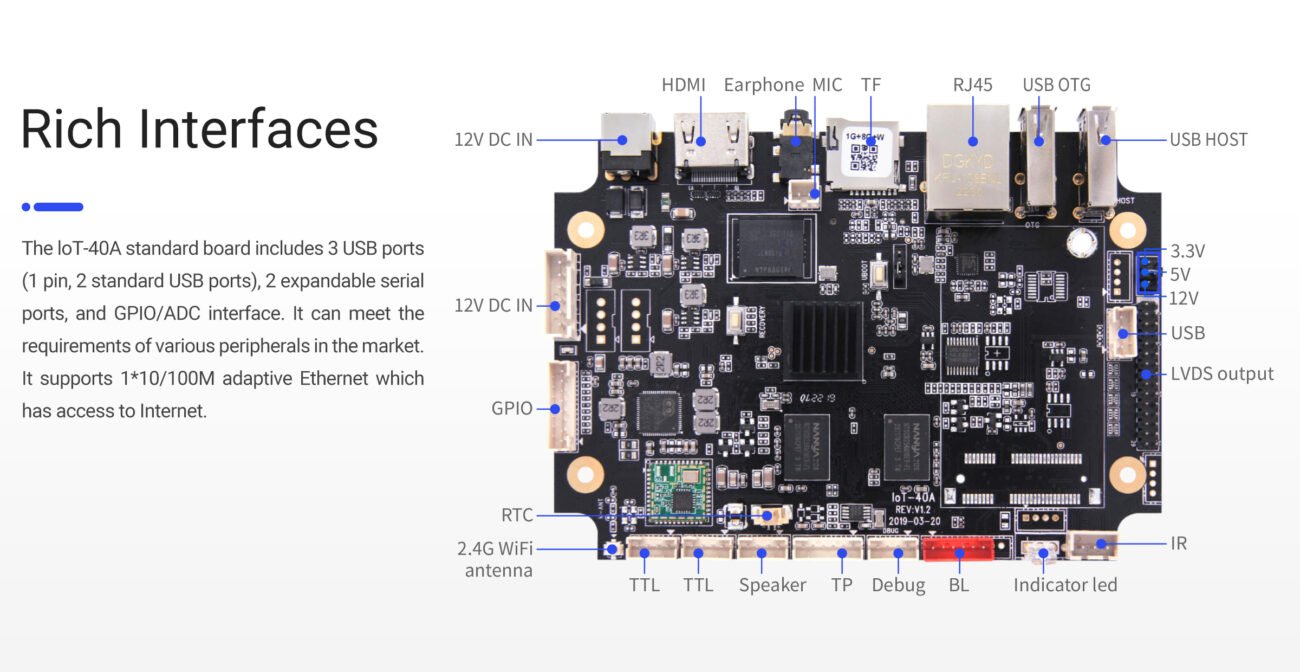

2. I/O and Connectivity for AI Applications

AI deployments often involve multi-device input/output. Leading AI motherboards support:

Example use case: Smart Retail Solutions with AI Vision System

3. Power Efficiency and Thermal Design

Edge AI requires energy-efficient, rugged solutions. Industrial AI motherboards are engineered to:

At ShiMeta Devices, we offer fanless AI motherboards with extended lifecycles, ideal for embedded OEMs and industrial AI integration.

When selecting an AI motherboard for edge deployment, evaluate:

Pro Tip: Confirm that the board supports the Linux AI SDKs you plan to use, Android NNAPI if you deploy on Android, and over-the-air (OTA) updates, so the deployment can be maintained and scaled over the long term.

| Feature | Consumer-Grade | Industrial-Grade |
| --- | --- | --- |
| Temperature Range | Limited | Extended (-20°C to +60°C) |
| Lifecycle | 1–2 years | 5–7 years guaranteed |
| Reliability | Moderate | EMC/ESD certified |
| Support | Minimal | Full OEM support + RMA |

For mission-critical projects, industrial AI motherboards deliver higher stability, compliance, and ROI.
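
As a rough illustration of how the temperature row might be used during site planning, the sketch below checks whether a site's expected ambient range fits inside the extended range from the table (-20°C to +60°C). The function name and the 5°C safety margin are assumptions made for this example, not a vendor recommendation.

```python
def within_rated_range(site_min_c: float, site_max_c: float,
                       rated_min_c: float = -20.0, rated_max_c: float = 60.0,
                       margin_c: float = 5.0) -> bool:
    """True if the site's ambient range fits the rated range with a safety margin."""
    if site_min_c > site_max_c:
        raise ValueError("site_min_c must not exceed site_max_c")
    # Assumption: keep `margin_c` degrees of headroom at both ends of the range.
    return (site_min_c >= rated_min_c + margin_c
            and site_max_c <= rated_max_c - margin_c)


print(within_rated_range(-10.0, 45.0))  # True
print(within_rated_range(-25.0, 45.0))  # False
```

Note that the temperature inside an enclosure can be well above ambient, so the site figures should reflect conditions at the board itself.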

At ShiMeta Devices, we design AI computing motherboards optimized for OEMs, robotics, healthcare, and industrial automation.

Our solutions feature:

The future of AI is at the edge, where real-time processing takes place locally on the device rather than in the cloud. AI computing motherboards are at the center of this shift, serving as the foundation for edge machine learning, speech recognition, computer vision, and predictive analytics.

Whether you are building intelligent retail solutions, healthcare AI systems, smart kiosks, or autonomous robots, selecting the right AI motherboard for your edge application is essential. The right hardware is a critical factor in the success of next-generation AI projects: it underpins performance, system stability, and long-term return on investment.

An AI computing motherboard is an embedded system board designed specifically for AI workloads. It incorporates specialized AI processors like NPU (Neural Processing Unit), GPU, or TPU to handle machine learning inference, computer vision, and real-time data analytics at the edge.

NPUs are optimized for ultra-low power AI inference, which makes them ideal for IoT and vision processing. GPUs offer highly parallel computing power for AI training and complex rendering. Choose an NPU for edge AI inference and a GPU for AI training or 4K+ workloads.

AI computing motherboards can operate without a constant cloud connection. They are designed for edge computing, so AI models run locally on the device, which enables real-time inference and reduces latency.

Edge AI refers to deploying AI algorithms and models on local devices (at the “edge” of the network) rather than in cloud data centers. This enables faster response times, better privacy, and reduced bandwidth requirements.
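
The bandwidth point can be made concrete with a back-of-the-envelope comparison between streaming video to the cloud and sending only detection metadata from the device. Every number below (4 cameras, a 4,000 kbit/s stream per camera, 5 events per second at 200 bytes each) is an assumed placeholder chosen for illustration, and the function names are hypothetical.

```python
def uplink_kbps_streaming(video_bitrate_kbps: float, cameras: int) -> float:
    """Uplink needed when every camera streams video to the cloud."""
    return video_bitrate_kbps * cameras


def uplink_kbps_edge(events_per_second: float, bytes_per_event: int,
                     cameras: int) -> float:
    """Uplink needed when inference runs locally and only metadata is sent."""
    return events_per_second * bytes_per_event * 8 / 1000 * cameras


# Assumed placeholder values, not measurements.
streaming = uplink_kbps_streaming(video_bitrate_kbps=4000, cameras=4)
edge = uplink_kbps_edge(events_per_second=5, bytes_per_event=200, cameras=4)

print(f"streaming: {streaming:.0f} kbit/s, edge: {edge:.0f} kbit/s")
print(f"reduction: {streaming / edge:.0f}x")  # 500x under these assumptions
```

The actual ratio depends entirely on the video bitrate and on how much metadata the application reports.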

Key factors when choosing a board: AI accelerator type (NPU/GPU/TPU), memory (4–16 GB), connectivity (USB, Ethernet, PCIe), video outputs (HDMI, eDP), and ecosystem support (TensorFlow, PyTorch, OpenVINO).
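
These factors lend themselves to a simple requirements check when comparing candidate boards. The sketch below is one possible way to structure it. `BoardSpec`, `Requirements`, `unmet_requirements`, and the two example boards are all hypothetical and do not describe real products.

```python
from dataclasses import dataclass, field


@dataclass
class BoardSpec:
    name: str
    accelerator: str                      # "NPU", "GPU", or "TPU"
    memory_gb: int
    connectivity: set = field(default_factory=set)
    video_outputs: set = field(default_factory=set)
    frameworks: set = field(default_factory=set)


@dataclass
class Requirements:
    accelerator: str
    min_memory_gb: int
    connectivity: set = field(default_factory=set)
    video_outputs: set = field(default_factory=set)
    frameworks: set = field(default_factory=set)


def unmet_requirements(board: BoardSpec, req: Requirements) -> list:
    """Return human-readable reasons the board fails the requirements."""
    problems = []
    if board.accelerator != req.accelerator:
        problems.append(
            f"accelerator: need {req.accelerator}, board has {board.accelerator}")
    if board.memory_gb < req.min_memory_gb:
        problems.append(
            f"memory: need {req.min_memory_gb} GB, board has {board.memory_gb} GB")
    for label, have, need in (
        ("connectivity", board.connectivity, req.connectivity),
        ("video outputs", board.video_outputs, req.video_outputs),
        ("frameworks", board.frameworks, req.frameworks),
    ):
        missing = need - have
        if missing:
            problems.append(f"{label}: missing {', '.join(sorted(missing))}")
    return problems


# Hypothetical project needs and boards, for illustration only.
req = Requirements("NPU", 8, {"USB", "Ethernet"}, {"HDMI"}, {"TensorFlow"})
board_a = BoardSpec("board-a", "NPU", 8, {"USB", "Ethernet", "PCIe"},
                    {"HDMI", "eDP"}, {"TensorFlow", "PyTorch"})
board_b = BoardSpec("board-b", "NPU", 4, {"USB"}, {"HDMI"}, {"TensorFlow"})

for board in (board_a, board_b):
    print(board.name, unmet_requirements(board, req) or "meets all requirements")
```

Returning the list of unmet items, rather than a plain yes or no, makes it easier to see which trade-off a near-miss board would require.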