As artificial intelligence (AI) shifts from cloud to edge, businesses are seeking ways to deploy intelligent systems that are not only powerful but also compact, fanless, and energy-efficient. Whether it’s a smart retail kiosk, a security access terminal, or a health screening device, energy-efficient AI terminals are becoming a necessity rather than an optional feature.

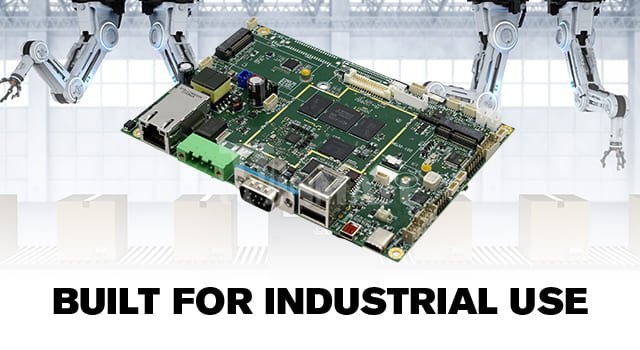

At the heart of these innovations is the ARM motherboard: a compact, low-power, and increasingly AI-ready computing platform that enables high-performance inference on the edge without breaking power or thermal budgets.

In this article, we’ll walk step by step through building an energy-efficient AI terminal on an ARM-based motherboard, covering best practices for hardware, software, power optimization, and real-world applications.

The Rise of Edge AI

From contactless check-in kiosks to smart vending machines, AI at the edge is reshaping how machines interact with people. By running inference locally (on-device), edge AI terminals offer:

However, edge devices often face power and thermal constraints, especially in enclosed, unattended, or mobile environments. That’s where ARM motherboards excel.

ARM SoCs (systems on a chip) offer:

This makes them ideal for smart terminals where space, energy, and cooling are at a premium.

Let’s start by breaking down the essential hardware required to build a fully functional AI terminal using an ARM motherboard.

1. Choosing the Right ARM SoC

Look for ARM-based boards with SoCs that include:

Recommended SoCs:

2. RAM and Storage

3. Display and Touch Interface

If your terminal is interactive, the board needs a display output such as HDMI, LVDS, or eDP, plus a touch interface: either USB capacitive touch or an I²C touch panel.

4. Cameras and Sensors

5. Power Supply

Building an AI terminal doesn’t stop at hardware. Let’s walk through the software side:

1. Operating System

Most ARM boards support:

ShiMeta offers customizable Linux BSPs for its ARM platforms, tailored for AI deployment.

2. AI Frameworks and Toolkits

Depending on your SoC, choose AI toolkits that support hardware acceleration:

| Toolkit | Supported Boards | Features |
| --- | --- | --- |
| TensorFlow Lite | Most ARM boards | Lightweight, good for MobileNet/EfficientNet |
| RKNN Toolkit | RK3399Pro, RK3588 | Rockchip’s native AI toolkit for NPU |
| ONNX Runtime | RK3588, i.MX 8M Plus | Run models trained in PyTorch, TensorFlow |
| OpenVINO | i.MX 8 series | Intel’s optimized inference toolkit (on ARM) |

Convert and optimize models before deployment to reduce compute load and memory usage.
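
To make the idea of "optimizing before deployment" concrete, here is a minimal pure-Python sketch of 8-bit affine quantization, one of the most common ways to shrink a model. It is an illustration of the arithmetic only, not the API of TensorFlow Lite, RKNN Toolkit, or any other toolkit; the function names `quantize_int8` and `dequantize` are hypothetical, and real converters add calibration data and per-channel handling.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float, int]:
    """Map float weights to int8 values; returns (values, scale, zero_point)."""
    # The representable range must include 0.0 so that zero maps to an integer.
    lo = min(weights + [0.0])
    hi = max(weights + [0.0])
    span = hi - lo
    # One int8 step covers `scale` float units; fall back to 1.0 for all-zero input.
    scale = span / 255.0 if span > 0 else 1.0
    # zero_point is the int8 value that represents the float 0.0.
    zero_point = round(-128 - lo / scale)
    values = [max(-128, min(127, round(w / scale) + zero_point)) for w in weights]
    return values, scale, zero_point


def dequantize(values: List[int], scale: float, zero_point: int) -> List[float]:
    """Recover approximate float weights from int8 values."""
    return [(v - zero_point) * scale for v in values]


if __name__ == "__main__":
    original = [-1.0, 0.0, 0.5, 1.0]
    q, scale, zp = quantize_int8(original)
    print("int8 values:", q)
    print("restored   :", dequantize(q, scale, zp))
```

Each weight is stored in one byte instead of the four bytes of a float32, and the restored values differ from the originals by only a step or two of size `scale`. That trade of a little precision for a 4x smaller weight footprint is what makes quantized models attractive on memory- and power-constrained boards.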

3. Inference Optimization Tips

4. Device Management

Use remote device management platforms like:

These tools let you control large fleets of AI terminals remotely, with minimal overhead.

Energy efficiency isn’t just about the chip; it also depends on smart system design.

Hardware-Level Optimization

Software-Level Power Saving
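
One software-level lever on Linux is the CPU frequency governor, which the kernel exposes through the cpufreq sysfs interface. The sketch below reads and switches the governor for `cpu0`. It assumes your board's kernel has cpufreq enabled and exposes these files; the set of available governors varies by BSP, and writing the setting normally requires root. The helper names are hypothetical.

```python
from pathlib import Path
from typing import List

# Standard Linux cpufreq location for the first CPU core.
# Assumption: the board's kernel exposes this directory.
CPUFREQ_DIR = Path("/sys/devices/system/cpu/cpu0/cpufreq")


def read_governor(cpufreq_dir: Path = CPUFREQ_DIR) -> str:
    """Return the name of the currently active governor."""
    return (cpufreq_dir / "scaling_governor").read_text().strip()


def available_governors(cpufreq_dir: Path = CPUFREQ_DIR) -> List[str]:
    """Return the governors this kernel build offers."""
    return (cpufreq_dir / "scaling_available_governors").read_text().split()


def set_governor(name: str, cpufreq_dir: Path = CPUFREQ_DIR) -> None:
    """Switch governor, refusing names the kernel does not list."""
    options = available_governors(cpufreq_dir)
    if name not in options:
        raise ValueError(f"governor {name!r} not available; choose from {options}")
    (cpufreq_dir / "scaling_governor").write_text(name)


if __name__ == "__main__":
    if CPUFREQ_DIR.exists():
        print("current  :", read_governor())
        print("available:", available_governors())
    else:
        print("cpufreq sysfs interface not found on this system")
```

A terminal that idles most of the day might run a power-saving governor by default and switch to a faster one only while a user is interacting with it. Passing `cpufreq_dir` as a parameter also lets you point the helpers at a test directory instead of the real sysfs tree.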

AI Model Optimization

Smart Workload Scheduling

Only run inference when needed:

This ensures you’re not burning power unnecessarily.
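
One simple way to run inference only when needed is to gate the model behind a cheap change detector. The sketch below compares each frame with the previous one and calls the model only when the scene has changed enough. The frame format (a flat list of grayscale values), the `run_model` callback, and the threshold are all assumptions for illustration; a real terminal might instead trigger on a PIR sensor, a touch event, or a hardware motion interrupt.

```python
from typing import Any, Callable, Iterable, List, Optional, Sequence

# Hypothetical frame format: flattened grayscale pixels, values 0-255.
Frame = Sequence[int]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel difference between two equally sized, non-empty frames."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def gated_inference(
    frames: Iterable[Frame],
    run_model: Callable[[Frame], Any],
    threshold: float = 10.0,
) -> List[Any]:
    """Run the (expensive) model only on frames that differ from the previous one."""
    results: List[Any] = []
    previous: Optional[Frame] = None
    for frame in frames:
        # The first frame has no reference, so it only seeds the comparison.
        if previous is not None and mean_abs_diff(frame, previous) >= threshold:
            results.append(run_model(frame))
        previous = frame
    return results


if __name__ == "__main__":
    idle = [0, 0, 0, 0]
    busy = [100, 100, 100, 100]
    stream = [idle, idle, busy, busy]
    detections = gated_inference(stream, run_model=lambda f: "inference ran")
    print(f"{len(detections)} inference call(s) for {len(stream)} frames")
```

In this toy stream the model runs only on the frame where the scene changes, so the accelerator can stay idle the rest of the time. The frame comparison itself costs far less than a neural network forward pass, which is what makes the gate worthwhile.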

Let’s explore how energy-efficient ARM-based AI terminals are deployed across industries:

Smart Retail & Vending Machines

Access Control & Facial Recognition

Smart Healthcare Kiosks

Environmental Monitoring Stations

ShiMeta i.MX 8M Plus Board

As AI terminals become more embedded in our daily lives—from shopping to security to diagnostics—the need for compact, low-power, and intelligent systems has never been greater.

By choosing the right ARM motherboard and optimizing your hardware and software stack, you can deploy AI terminals that are:

Whether you’re building one prototype or deploying thousands of terminals globally, ShiMeta Devices offers customizable ARM motherboards that combine industrial-grade performance with open AI flexibility.